How to Work Around Biased B2B Information Sources

B2B Tech Buyers are Wary of Biased Information Sources

As a B2B technology buyer, you seek sources you can trust to give you the full scoop on a product — how to use it as a secret weapon, all of its shortcomings, how well it plays with other tools, and what kind of ROI you can expect.

You’re looking to feel secure in your choice. You know there’s no magic bullet — and you wouldn’t trust any source that claimed to be one.

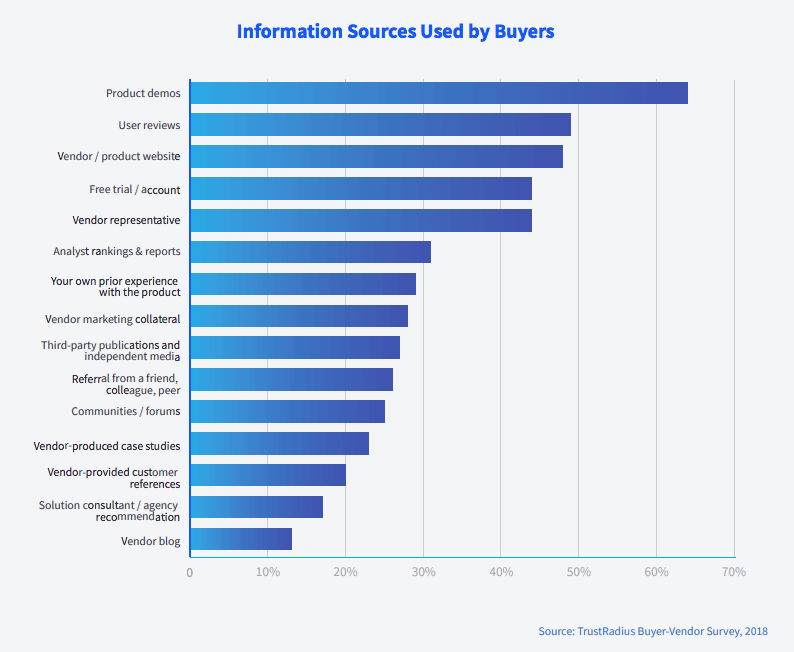

In fact, according to a recent survey we did of 438 B2B technology buyers, the average buyer uses 5 different sources of information, because no one resource is perfectly adequate or trustworthy.

The top five resources used by buyers were product demos, user reviews, vendor/product websites, a free trial or account, and vendor representatives.

Buyers also used resources like analyst rankings & reports, prior experience with the product, vendor marketing collateral, third-party publications and independent media, referrals from friends, colleagues or peers, communities/forums, vendor-produced case studies, vendor-provided customer references, solution consultants or agency recommendations, and vendor blogs.

As buyers consult these diverse resources, they pay close attention to potential biases, evaluating not only the products but also the credibility of sources.

Bias, it turns out, is one of the main factors linked to lack of trust and lack of influence.

The survey asked buyers to rank the information sources they used on two dimensions: trustworthiness and influence over their purchase decision. When we asked buyers what was wrong with the least influential resource, and why it factored in less than other resources, “Biased” was the #3 most mentioned word (behind “Vendor” and “Product”). It was the #4 most mentioned word when we asked what was wrong with the least trustworthy resource (behind “Trust,” “Vendor,” and “Product”).
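
If you collect open-ended feedback from your own buying group and want to see which words come up most, a similar tally is easy to run. The sketch below is only an illustration. The sample responses, the stopword list, and the `top_words` helper are all hypothetical, and this is not necessarily how the survey responses above were analyzed.

```python
import re
from collections import Counter

# Hypothetical open-ended answers, made up for illustration
# (these are not actual survey responses).
responses = [
    "The vendor material felt biased toward the product.",
    "Too biased, and written by the vendor.",
    "Vendor case studies only praise the product.",
]

# A tiny, hand-picked stopword list (an assumption; extend as needed).
STOPWORDS = {"the", "and", "by", "too", "toward", "only", "felt", "written"}


def top_words(texts, n=3):
    """Return the n most-mentioned words, ignoring case and stopwords."""
    counts = Counter()
    for text in texts:
        words = re.findall(r"[a-z']+", text.lower())
        counts.update(w for w in words if w not in STOPWORDS)
    return counts.most_common(n)


print(top_words(responses))
```

A raw word count like this only shows which terms recur. You still need to read the responses themselves to understand what people meant by them.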

Bias Examples That Are On Buyers’ Radar

In the B2B buying process, not all biases are created equal. Understanding the way other buyers think about bias, and how to account for and compensate for bias in various resources, can help you get the full picture you need to buy confidently.

Here’s a closer look at some of the different types of bias buyers who took our survey care about, and how bias impacts the trust and influence they place in different resources.

Vendor Bias

Selection bias in customer evidence shared by vendors.

Selection bias is when a sample of individuals or data is chosen in a non-random way, so that the picture is skewed rather than representative. Vendors often share customer evidence (like case studies, references, ROI benchmarks, etc.) that they’ve selected because it shows the ideal use case for their product, with optimum success.

Buyers recognize that vendors’ sampling methods are biased towards flagship customers that have the most impressive results and with whom they have the closest relationships. They perceive a strong selection bias, which is why vendor-produced case studies and vendor-provided customer references were ranked the two least-trustworthy and least-influential forms of customer proof.

“Vendor-provided resources will often be skewed in their favor and typically highlight ‘best-case’ scenarios.”

“We expect vendors to provide the most stellar reviews they receive… so it’s not necessarily a real sample of reviews.”

“References provided by the vendor themselves can be skewed at times.”

“The least influential sources were too biased since they were created by the vendor themselves. Their results/insight were likely based on cleaned data in an ideal scenario, geared towards promoting the product’s strengths but not transparent about its limitations.”

“White papers and product demos are helpful, but any company selling a product will choose case studies that show them in a good light and will demo the most cutting edge functionality, whether or not it actually fits our use case.”

Suggestions to combat selection bias:

- Look elsewhere for more representative samples (such as on review sites, in customer forums, peer recommendations, independent case studies, etc.) to figure out what the average customer really thinks about the product, and find perspectives from other customers with specific use cases similar to your own–not just the best-case scenarios.

- Source your own independent customer references on LinkedIn. You may be able to find people with the right roles who work at the companies touted on the vendor’s website, or people who specifically mention the product in their job description or skills section. Not everyone who you reach out to will respond, but many people you find will be flattered by your interest in their expertise, and their viewpoint will be more off-the-cuff and less polished than the vendor’s hand-picked references.

Motivational bias in salespeople and vendor collateral

Motivational bias is when someone is incentivized to see or do things in a particular way based on their circumstances. Usually people are aware of their own motivational biases.

In the B2B buying process, many buyers see vendor representatives and vendor-created collateral as self-promotional, with a clear financial incentive. Representatives are tasked with selling a product, so of course they are biased in favor of it and want it to come off in a positive light.

This limits the amount of trust and sway vendors have over buyers. While buyers see vendors as the best source on things like product details, positioning, and pricing, they seek insight into product limitations elsewhere. Many aim to validate vendors’ claims around ROI, implementation and adoption, etc. with information from other resources with less skin in the game.

“I tend to assume a bias when speaking with a vendor representative.”

“Marketing collateral has an agenda: sell the product. It is useful for identifying features, but it’s hard to trust it as an objective source.”

“Websites can be helpful for finding objective facts like technical specs, etc, but at the end of the day they’re a marketing tool and therefore not going to give you the full picture.”

“The vendor resources are usually selling pitches which suppress competitive knowledge and self shortcomings. Also vendor resources are not always tuned to specific customer requirement as each business is different.”

“We used vendor materials to understand the best capabilities of the product but kept in mind their bias and unlikelihood of naming limitations.”

Suggestions to combat motivational bias:

- Ask tough questions to really probe around product limitations.

- Try to break the product with a free trial (and then ask questions about the limitations you find).

- Get feedback directly from current users (reviews, reference calls, etc.) to validate vendor claims and get ideas for new questions to ask.

- Check out the competition to see if you can suss out differences in features and positioning.

Analyst Bias

Familiarity bias in analyst rankings & reports

Though some buyers see analysts as an unbiased, objective resource, others feel analysts don’t quite live up to that promise. Because analysts purport to give an unbiased, in-depth view of the market, sometimes at a significant cost to the buyer, buyers are especially critical of any potential biases or lack of detail.

In our survey, skeptical buyers said they worried that individual analysts’ subjective opinions might have too big an impact on the results, that there is a commercial bias towards vendors that spend more time and money with the analyst firm, and that analysts are overly focused on the very established players with whom they’re already familiar.

Familiarity bias, or a preference for investing in well-known companies/products rather than newcomers or more diverse options, creates a limited view of the market, and may lead analysts to pay less attention to solutions that don’t fit the typical enterprise use case.

“Analyst rankings are subjective to how the analyst perceives the marketplace. At times this can be a very limited view.”

“People who are more familiar with certain products are more biased.”

“Analyst content can be biased by client relationships.”

“Analyst reports tend to be focused on software for large to mid-sized business. I didn’t find their recommendations particularly helpful for our small business.”

“Least trustworthy would be analyst rankings. I feel they are too broad and while it’s nice to know your product is in an upper quadrant, sometimes the other quadrants will meet your needs. You may only be looking for lite functionality at a reasonable cost.”

Suggestions to combat familiarity bias:

- As you build your long list (or your short list) of products to consider, look beyond the established players that analysts tend to cover. Consult crowd-sourced resources like review sites, forums, Siftery, etc. and resources like Datanyze and Crunchbase that cover market share and company status even for smaller players.

- Read blogs written by industry influencers, and keep an eye on tech news outlets for announcements about new and niche products.

- Validate analyst commentary with your own hands-on product evaluations and feedback from current users (in the form of reviews, reference calls, etc.).

Review Bias

Subjective bias & subjective validation in reviews

Most buyers see product users and their reviews as unbiased: according to a recent poll on TrustRadius, 83% trust user reviews. But some do call them biased. Both sides agree that unlike vendors, reviewers aren’t trying to sell anything, and unlike analysts, reviewers don’t claim to be objective.

User reviews are, fundamentally, opinions, shaped by situational and dispositional constraints like where the reviewer works, their budget, their level of competency and responsibility, their workstyle, and the other tools and systems they use. Bias in reviews, then, is a matter of subjectivity: each review reflects one person’s opinion.

“Sometimes user reviews can be biased by personal preferences.”

“Lots of people are incapable of separating a problem with the product from a problem with their usage of the product.”

“I do not trust user reviews very much. There is so much I do not know that impacts their opinion.”

Often, buyers put more stock in reviews when the reviewer’s use case, level of experience, or role matches their own. (There has to be a certain level of detail in the review and about the reviewer, of course, to affirm this.) This is subjective validation. When it’s not clear where the reviewer is coming from, or the reviewer’s use case does not match their own, buyers are more likely to scrutinize the review and consider the feedback provided more questionable or unreliable.

“None of them were in our exact situation.”

“Other people’s opinions are subjective, use cases and situations differ.”

“Good to read reviews but hard to know who is biased, if the company leaving the review matches the needs of our own, and if [the vendor] had any influence in the reviews.”

Suggestions to combat subjective bias:

- Look for reviews that allow you to understand the reviewer’s POV. This might be detailed in the review itself–such as their use case, which version/modules of the product they’re using, what results they’ve seen, what other products they’ve had experience with, etc.–or it might be metadata about the reviewer that gives demographic or firmographic context. Some review sites display this information even for anonymous reviews.

- Seek out reviews from other users like you, with similar roles/titles, company sizes, industries, etc. Some review sites offer advanced filters to make these easy to find.

- Look for trends across all reviews. While people with different use cases won’t agree on everything, they do tend to agree on some things. The patterns in differences of opinion you notice can also be informative–for example, you may not find any reviews from companies quite as large as your own, but if you see lots of small business users complaining that a product is too complex and too expensive, with fewer complaints from users as company size increases, that may be a sign that it’s more suited for larger companies like yours.
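
If you keep notes on the reviews you read, spotting a trend across company sizes can be a simple tally. The sketch below is only an illustration. The records, the field names (`company_size`, `says_too_complex`), and the `complaint_rate_by_size` helper are hypothetical, and real review sites may expose this information differently or not at all.

```python
from collections import defaultdict

# Hypothetical notes on reviews you have read; the fields and values
# are made up for illustration.
reviews = [
    {"company_size": "small", "says_too_complex": True},
    {"company_size": "small", "says_too_complex": True},
    {"company_size": "small", "says_too_complex": False},
    {"company_size": "mid", "says_too_complex": True},
    {"company_size": "mid", "says_too_complex": False},
    {"company_size": "large", "says_too_complex": False},
    {"company_size": "large", "says_too_complex": False},
]


def complaint_rate_by_size(records):
    """Share of reviews in each company-size group that raise the complaint."""
    totals = defaultdict(int)
    complaints = defaultdict(int)
    for record in records:
        size = record["company_size"]
        totals[size] += 1
        if record["says_too_complex"]:
            complaints[size] += 1
    return {size: complaints[size] / totals[size] for size in totals}


rates = complaint_rate_by_size(reviews)
for size in ("small", "mid", "large"):
    print(f"{size}: {rates[size]:.0%} mention complexity")
```

With only a handful of reviews per group, treat a pattern like this as a prompt for follow-up questions rather than as proof.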

Confirmation bias in reading reviews

Another, related, phenomenon is confirmation bias–or feeling more strongly that something is true because it matches your own viewpoint. This type of bias actually leads buyers to trust some reviews more, when the reviewer echoes the buyer’s opinions about a product, or prior experience with a product.

“I remember finding one [review] which corroborated my experience perfectly and told the truth all round.”

But some buyers purposefully seek out sources that don’t match their own experience, to make a stronger objective case.

“I tried not to let my own experiences with particular products influence the decision for my team. I wanted to fairly judge all of our options.”

“Most trustworthy resources are the ones that are based on my own, subjective experience. Other people’s reviews also tend to be informative as they provide a different user perspective.”

Suggestions to combat confirmation bias:

- Compare the reviews you most strongly identify with to the other reviews you can find. Are the opinions expressed in the reviews you’re drawn to shared by other reviewers? If not, is there a reason for the difference in opinion? Do they tell a clear story related to use case or level of experience?

- Share the reviews with others in your buying group (or with a trusted colleague, if you’re evaluating products solo). See what stands out to them–is it different from what stood out to you? It can be helpful to discuss reviews with other stakeholders who have slightly different perspectives.

- Put your takeaways from reviews to the test! Ask the vendor questions based on what you read in reviews, and if you get to speak with a customer reference, ask for their two cents as well. Request a product demo or free trial so that you can play around with specific areas of the product that were mentioned in reviews. That way, you’ll be able to see for yourself.

Commercial bias on review sites

Several buyers who took our survey noted that they watch for commercial bias on review sites (advertisements, paid placement, rankings based on business relationships, etc.) and that they see this as a weakness, leading them to prefer other review sites.

“Any which had significant adverts really put me off. The significance of a good review site is a guarantee of no commercial bias. I don’t know how this could be certified because I’m skeptical of almost everything on the net!”

“Some sites I thought were biased. Others were listing out of date information, so I didn’t trust them.”

“Some were very cluttered and didn’t easily show the feature comparison. Some were littered with ads and when I’d click to further explore I’d be sent through some affiliate link loophole. The ones I enjoyed were clean and simple and allowed me to easily see just the tools I was comparing side by side.”

Suggestions to combat commercial bias:

- Pay attention to the logic of how products are ranked and displayed. Are products sorted by objective criteria like number of reviews, search traffic, or star rating? Or is the logic fuzzy/unclear? These optics can subtly influence your perception of products. Stick to sites where you can understand why things look the way they do.

- If the site is recommending or recognizing certain products above others, make sure it’s based on specific factual criteria.

- Sniff out the review site’s business model. How do they make money? Do they sell leads (aka buyer contact information), premium placement, or ads to vendors? Does whether or not they have a paid relationship with a vendor impact the product’s score or visibility on the site, relative to other products? Can you find (or write) reviews of products regardless of whether the vendor is working with the review site? If you aren’t sure, check the site’s About Us section for a mission statement, quality assurance policies, and/or promises to site users. You may also want to check the company’s blog and LinkedIn profile to see how they position themselves.

Is the Truth about Products Subjective?

Ultimately, what buyers want is to determine whether a product will work for their business. Having a subjective point of view can be a resource’s greatest strength, or it can be a severe limitation to trustworthiness and influence in the minds of buyers. It all depends on whether the perspective is clear, and how it aligns with the buyer’s own perspective.

Buyers know that all sources have their biases. As one buyer said in the study, “Trust in any one resource is hard but common information across multiple sources gives credibility.”

Keeping in mind the type of bias a resource might have, and using these tips to suss it out, can go a long way in piecing together the full truth about the product, or the truth that’s most relevant to you.
